Careful!

You are browsing documentation for the next version of Kuma. Use this version at your own risk.

How ingress works

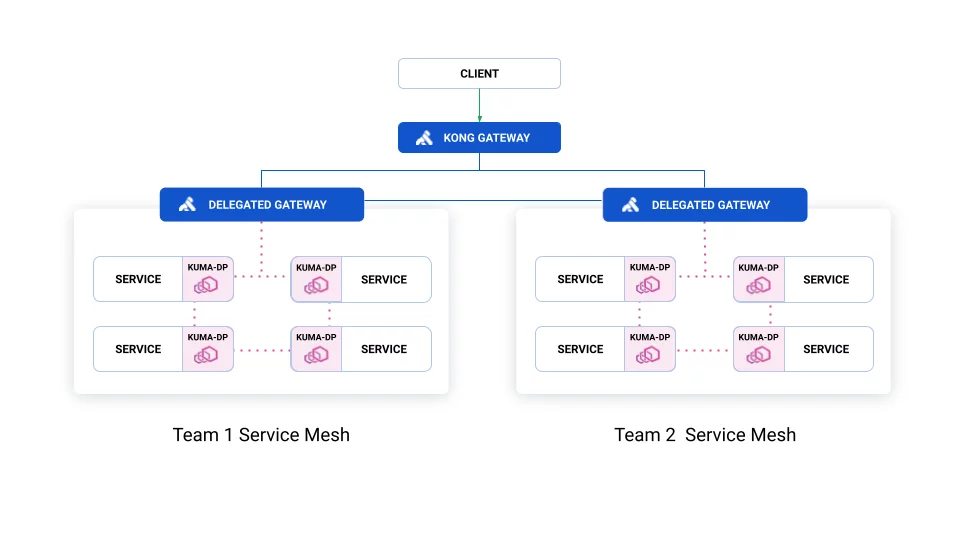

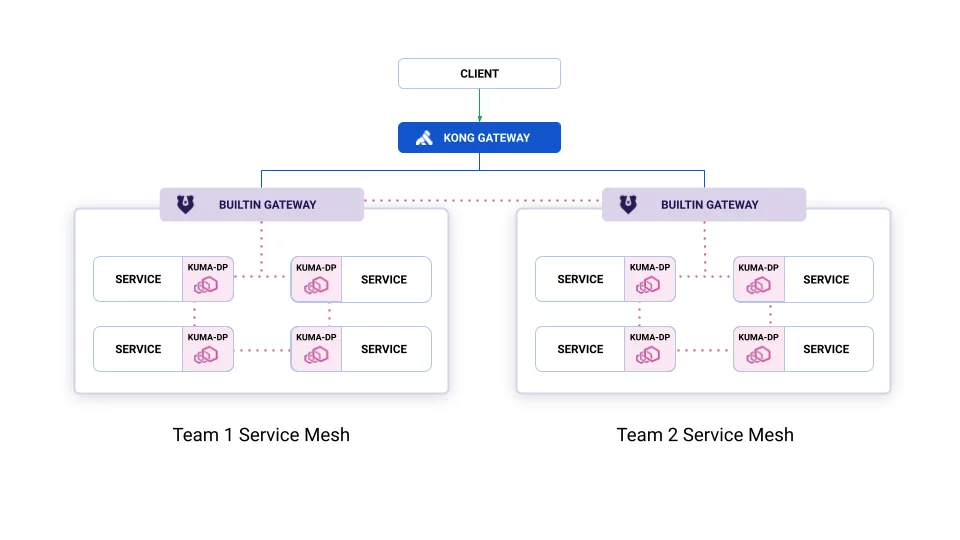

Kuma provides two features to manage ingress traffic, also known as north/south traffic. Both rely on a piece of infrastructure called a gateway proxy, which sits between external clients and your services in the mesh.

- Delegated gateway: lets you use any existing gateway proxy, such as Kong.

- Builtin gateway: configures instances of Envoy to act as a gateway.
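As a rough illustration of the two modes on Kubernetes, the fragment below sketches what each might look like. It assumes the `kuma.io/gateway` pod annotation for the delegated case and the `MeshGatewayInstance` and `MeshGateway` resources for the builtin case. All names (`kong-gateway`, `edge-gateway`), the image, the port, and the service type are hypothetical placeholders, and exact fields may differ between Kuma versions, so check the reference for the version you run.

```yaml
# Delegated gateway (sketch): an existing gateway Deployment is annotated
# so that Kuma treats its sidecar as a gateway proxy.
# "kong-gateway" and the image are placeholders, not values from this page.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: kong-gateway
  namespace: default
spec:
  selector:
    matchLabels:
      app: kong-gateway
  template:
    metadata:
      labels:
        app: kong-gateway
      annotations:
        kuma.io/gateway: enabled
    spec:
      containers:
        - name: proxy
          image: kong   # placeholder image
---
# Builtin gateway (sketch): Kuma deploys and configures Envoy instances itself.
# "edge-gateway" is a placeholder name.
apiVersion: kuma.io/v1alpha1
kind: MeshGatewayInstance
metadata:
  name: edge-gateway
  namespace: default
spec:
  replicas: 1
  serviceType: LoadBalancer
---
# Listener configuration for the builtin gateway above.
# The selector assumes the service tag Kuma derives from the instance name and namespace.
apiVersion: kuma.io/v1alpha1
kind: MeshGateway
mesh: default
metadata:
  name: edge-gateway
spec:
  selector:
    match:
      kuma.io/service: edge-gateway_default_svc
  conf:
    listeners:
      - port: 8080
        protocol: HTTP
```

In the delegated case the gateway software itself stays under your control and only its sidecar is managed by Kuma. In the builtin case both the proxy and its listeners are described through Kuma resources.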

Gateways exist within a Mesh.

If you have multiple Meshes, each Mesh requires its own gateway. You can connect Meshes to each other using cross-mesh gateways.

The diagrams below show the difference between delegated and builtin gateways. The blue lines represent traffic that is not managed by Kuma.

Builtin, with Kong Gateway at the edge:

Delegated Kong Gateway: