Kuma 2.2 Released with OpenTelemetry Support, Offline Token Signing, MeshHTTPRoute Improvements and More!

We’re excited to announce the release of Kuma 2.2. This minor release adds many traffic control features, incremental improvements to our UI and policies, and a number of smaller features and bug fixes.

To take advantage of the latest and greatest in service mesh, we strongly suggest upgrading to Kuma 2.2. Upgrading is easy through kumactl or Helm.

Notable features:

- 🚀 OpenTelemetry support for tracing and access logging

- 🚀 Added the ability to define MeshProxyPatch policies using JSONPatch, allowing greater power and flexibility to customize underlying Envoy configuration

- 🚀 Multiple improvements and functionality added to the MeshHTTPRoute policy, including:

  - Cross-zone support

  - Request mirroring

  - Host header rewrites for the MeshGateway

  - Header matching

- 🚀 Support for retry predicates and priorities

- 🚀 Additional options for customizing the pods backing a MeshGatewayInstance deployment

- 🚀 Upgraded underlying Envoy version to 1.25 series

- 🚀 New MeshLoadBalancing policy, enabling more granular control of load balancing configuration between services

- 🚀 Official support for deploying a Universal mode global control plane (Postgres-backed) to a Kubernetes cluster for better availability and resilience characteristics

- 🚀 Ability to provide a public key for offline token signing and validation

- 🚀 Various other bug fixes and quality-of-life improvements across Kuma

And a lot more! Check out the full release notes to see everything in this release.

OpenTelemetry Support

We’re really excited to announce that in 2.2 we’ve released the ability to use an OpenTelemetry collector as a target for both access logs and traces within Kuma. Huge shoutout to our community members who contributed this functionality upstream!

Our support for OTEL means that in both the MeshAccessLog and MeshTrace policies, it’s now possible to specify an openTelemetry type backend:

    apiVersion: kuma.io/v1alpha1
    kind: MeshAccessLog
    metadata:
      name: default
      namespace: kuma-system
      labels:
        kuma.io/mesh: default
    spec:
      targetRef:
        kind: Mesh
      from:
        - targetRef:
            kind: Mesh
          default:
            backends:
              - file:
                  path: /tmp/access.log
                  format:
                    plain: '[%START_TIME%]'
              - openTelemetry:
                  endpoint: otel-collector:4317
                  attributes:
                    - key: "start_time"
                      value: "%START_TIME%"

The OTEL collector is a great way to collect log, trace, and metrics data, translate it, and send it on to any external observability vendor tooling.
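
To make the collector side concrete, here is a minimal sketch of an OpenTelemetry Collector configuration that accepts OTLP over gRPC on port 4317 (the port used in the `otel-collector:4317` endpoint) and simply prints what it receives. The choice of the `debug` exporter and the `batch` processor is an assumption for illustration; in practice you would swap in the exporter for your observability backend.

```yaml
# Sketch only: a collector that receives OTLP data and prints it.
receivers:
  otlp:
    protocols:
      grpc:
        endpoint: 0.0.0.0:4317   # matches the port in the policy endpoint

processors:
  batch: {}                      # batch data before exporting

exporters:
  debug: {}                      # older collector releases call this "logging"

service:
  pipelines:
    traces:
      receivers: [otlp]
      processors: [batch]
      exporters: [debug]
    logs:
      receivers: [otlp]
      processors: [batch]
      exporters: [debug]
```

Once access logs and traces show up in the collector output, replacing `debug` with a vendor exporter is the only change needed on this side.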

Head over to the docs to check out how to use the new OpenTelemetry backend with both MeshAccessLog and MeshTrace policies.
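
Only the MeshAccessLog policy is shown above. A minimal sketch of the MeshTrace equivalent, assuming it mirrors the same backend shape and points at the same collector address, might look like the following. Depending on the Kuma version, each backend entry may also need an explicit `type` field, so treat this as a starting point and confirm against the policy reference for the version you run.

```yaml
# Sketch only: send traces for the whole mesh to an OTEL collector.
apiVersion: kuma.io/v1alpha1
kind: MeshTrace
metadata:
  name: default
  namespace: kuma-system
  labels:
    kuma.io/mesh: default
spec:
  targetRef:
    kind: Mesh
  default:
    backends:
      # Some Kuma versions may also require "type: OpenTelemetry" here.
      - openTelemetry:
          endpoint: otel-collector:4317
```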

Kubernetes Deployments with Postgres Storage

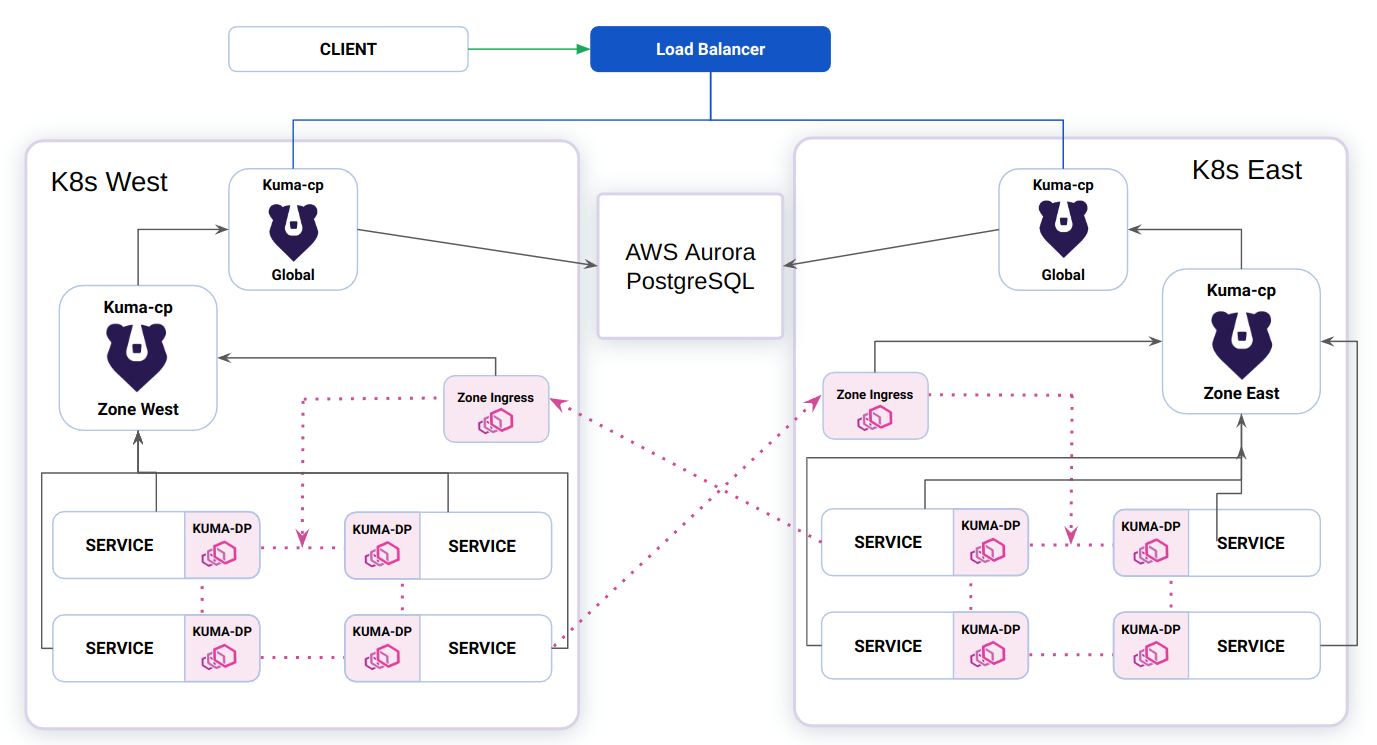

Kuma has historically supported two deployment modes: Kubernetes and Universal (VM / non-containerized). In the former, configuration is persisted in etcd in the form of Kubernetes CRDs. In the latter, an external Postgres database persists all of the policy and configuration objects. When Kuma is deployed on a cloud provider’s managed Kubernetes offering, this typically limits high availability, because a cluster can usually span multiple availability zones only within a single region. If that entire region experiences downtime, the global control plane is degraded as well.

In 2.2, we’re adding built-in support for a combination of the above modes that we’re calling ‘Universal on K8s’. It allows users to deploy Kuma into Kubernetes while pointing it at an external Postgres datastore (rather than relying on CRDs), so a single deployment can span multiple regions for greater resiliency.

This is a deployment model we have seen users work towards on their own, so we’re formalizing support for it with additional Helm chart options and supporting documentation.
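
As a rough illustration of what this mode means for the control plane itself, here is a sketch of a control plane configuration that runs in Universal mode against Postgres. The host, user, and database name are hypothetical placeholders, and the Helm chart options that produce this configuration are not shown here; see the Helm chart documentation for the exact values to set.

```yaml
# Sketch only: a global control plane storing its state in Postgres.
environment: universal      # Universal mode, even though the pods run on Kubernetes
mode: global
store:
  type: postgres            # use Postgres instead of Kubernetes CRDs
  postgres:
    host: postgres.example.internal   # hypothetical external database host
    port: 5432
    user: kuma                        # hypothetical user
    dbName: kuma                      # hypothetical database name
    # Supply the password out of band, for example through the
    # KUMA_STORE_POSTGRES_PASSWORD environment variable backed by a secret.
```

Because state lives in the external database rather than in the cluster, control plane replicas in different regions can share it as long as they can all reach the same Postgres endpoint.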

Offline Token Signing

In addition to the existing flow of generating signing keys, storing them in a secret, and using them to sign tokens on the control plane, Kuma 2.2 now also offers offline token signing. In this flow, you generate a public/private key pair and configure the control plane with only the public key for token verification. You can then generate all the tokens without running the control plane.

    dpServer:
      authn:
        zoneProxy:
          type: zoneToken
          zoneToken:
            enableIssuer: false # disable control plane token issuer that uses secrets
            validator:
              useSecrets: false # do not use signing key stored in secrets to validate the token
              publicKeys:
                - kid: "key-1"
                  key: |
                    -----BEGIN RSA PUBLIC KEY-----
                    MIIBCgKCAQEAsS61a79gC4mkr2Ltwi09ajakLyUR8YTkJWzZE805EtTkEn/rL2u/
                    ...
                    se7sx2Pt/NPbWFFTMGVFm3A1ueTUoorW+wIDAQAB
                    -----END RSA PUBLIC KEY-----

The advantages of this mode are:

- Easier, more reproducible control plane deployments that are more in line with GitOps.

- A potentially more secure setup, because the control plane never has access to the private keys.

Upgrading

Be sure to carefully read the Upgrade Guide before upgrading Kuma.

Join the community!

Join us on our community channels, including our official Slack chat, to learn more about Kuma. The community channels are useful for getting up and running with Kuma, as well as for learning how to contribute to and discuss the project roadmap. Kuma is a CNCF Sandbox project: neutral, open and inclusive.

The community call is hosted on the second Wednesday of every month at 8:30am PDT. And don’t forget to follow Kuma on Twitter and star it on GitHub!
